We harness the power of high-performance computing (HPC) through scalable, cost-effective, specialized environments that span everything from computers to buildings and facilities. Because computing is integral to most LLNL missions, we must predict and develop the HPC advances necessary to meet mission and user needs. Livermore Computing (LC) is home to the computational infrastructure that supports the advancement of HPC capabilities.

Hardware at the Edge of the Possible

Our hardware enables discovery-level science. The unique, world-class, balanced environment we’ve created at LLNL has helped usher in a new era of computing and will inspire the next level of technical innovation and scientific discovery.

LC delivers well over 3 exaflops of compute power, massive shared parallel file systems, powerful data analysis platforms, and archival storage capable of holding hundreds of petabytes of data. We support a diverse and evolving range of computing architectures to address multiple missions and security levels.

Advanced Technology Systems

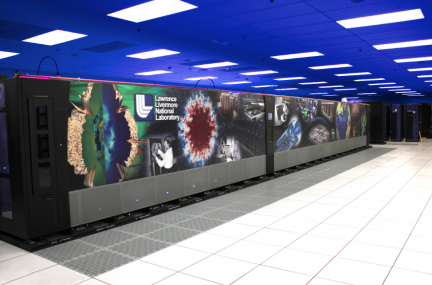

Our advanced technology systems, such as the National Nuclear Security Administration's (NNSA's) El Capitan and its early-access and unclassified sibling machines, run large-scale multiphysics codes in a production environment. Our advanced architecture systems explore technologies at scale so that matured technologies can serve as a basis for designing future production resources.

El Capitan performs complex and increasingly predictive modeling and simulation for NNSA’s vital Life Extension Programs (LEPs), which address weapons aging and emergent threat issues in the absence of underground nuclear testing.

Commodity Clusters

We deploy commodity technology systems in a wide range of sizes, suited to a variety of scientific workflows. These systems leverage industry advances and open-source software standards, and many are customized with large-memory nodes, GPUs, or other accelerators. The clusters are modular and provide a common programming model. Hardware components are carefully selected for performance, usability, manageability, and reliability, then integrated and supported using a strategy that evolved from practical experience. Since 2001, LLNL has deployed dozens of very large Linux clusters, some of the largest on the planet, including Dane with 382 petaflops and Jade with 344 petaflops.

Emerging Architectures

We also deploy specialized visualization, high-memory, and big data machines, as well as artificial intelligence (AI) accelerators. Two such AI systems are the SambaNova accelerator and the Cerebras wafer-scale engine.


File Systems and Storage

Our archival storage, which includes the world's largest TFinity tape archive, is massive, holding nearly 150 pebibytes of data. We also support and contribute to the development of the open-source Lustre parallel file system. Lustre is mounted across multiple compute clusters and delivers high-performance, global access to data.

HPC Software Leadership

Graphics and Visualization

Turning raw data into understandable information is a fundamental requirement for scientific computing. LC manages an array of visualization options, including tools developed in-house and commercial products, and it supports and promotes open standards and software. Visit LC's visualization software page to learn more.

Open-Source Software

Much of our software leverages the collective expertise of the HPC ecosystem, making the cost of entry to HPC affordable. LLNL has developed and deployed open-source tools across the entire software stack—operating systems, resource management, performance analysis, scalable debugging, data compression, and more. Major initiatives like the Exascale Computing Project and the Advanced Simulation and Computing program rely on open-source software. Visit software.llnl.gov to learn more.

Proxy Applications

Proxy apps represent the HPC workloads we care about while remaining small enough to understand easily and to use for trying out new ideas. LLNL is one of several DOE labs building proxy apps to facilitate two-way communication with vendors and researchers about how to evaluate trade-offs in future architectures and software development methods.

HPC Tools

LC supports several tools aimed at maximizing the efficiency of software deployment, platform management, and HPC center administration. Our resources include compilers, debuggers, memory checkers, profiling and tracing tools, and Linux cluster management software. Visit LC's software pages to learn more.
