Access to one supercomputer is a boon for researchers, but access to multiple supercomputers is even better. An LLNL team has received a Department of Energy (DOE) Office of Science funding award, which provides time on key high performance computing (HPC) systems in the DOE complex. The allocations are provided as node-hours (one node-hour is one hour of computation on one hardware node) to mission-driven projects under the Advanced Scientific Computing Research (ASCR) Program.

Led by LLNL computer scientist Harshitha Menon, the team is evaluating large language models (LLMs) in HPC programming settings. These researchers from Livermore, Oak Ridge National Laboratory (ORNL), the University of Maryland (UMD), and Northeastern University are building HPC-LLM tools to assist with software development.

Menon’s team received 700,000 node-hours divided among two exascale systems—ORNL’s Frontier and Argonne National Laboratory’s Aurora—and the petascale Perlmutter system at the National Energy Research Scientific Computing Center. These ASCR Leadership Computing Challenge allocations expand the project’s scope beyond Livermore’s Tuolumne supercomputer.
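To give a feel for the unit, the sketch below treats node-hours as nodes multiplied by wall-clock hours and subtracts a few jobs from the 700,000 node-hour total. The job sizes and the function names are made up for illustration; the article does not say how the allocation is split across the three systems or how large the team's jobs are.

```python
# Combined allocation reported in the article, in node-hours.
TOTAL_ALLOCATION = 700_000


def node_hours(nodes: int, wall_hours: float) -> float:
    """Node-hours charged for one job: nodes used times wall-clock hours."""
    if nodes < 0 or wall_hours < 0:
        raise ValueError("nodes and wall_hours must be non-negative")
    return nodes * wall_hours


def remaining(total: float, jobs: list[tuple[int, float]]) -> float:
    """Node-hours left after charging every (nodes, wall_hours) job."""
    return total - sum(node_hours(n, h) for n, h in jobs)


# Hypothetical jobs (assumed sizes, not from the article).
hypothetical_jobs = [(512, 6.0), (1024, 12.0), (64, 48.0)]

left = remaining(TOTAL_ALLOCATION, hypothetical_jobs)
print(f"Node-hours remaining: {left:,.0f}")
```

A small job held for a long time can cost as much as a large job that finishes quickly, which is why allocations are counted in node-hours rather than in jobs or in hours alone.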

“Our goal is to leverage the machines to further our work in improving the productivity of HPC developers with LLMs. This includes porting codes, conducting performance analysis, understanding how LLMs reason, and building HPC-LLM agents,” Menon explains. The extra nodes will help the team scale compute-intensive tasks such as reinforcement learning, while ensuring their solutions can adapt to different types of computing architectures. (A different hardware vendor built the processors on each of these systems.) These efforts are supporting the Productive AI-Assisted HPC Software Ecosystem project, also known as Ellora—a $7 million, three-year collaboration funded by the DOE Office of Science’s ASCR AI for Science initiative.

Multi-Pronged Research

With just one year to use these HPC resources, the team is tackling the research in multiple ways. Perlmutter and Aurora allocations will bolster interpretability studies into how LLMs formulate and explain their responses. Frontier will be used for fine-tuning and training LLMs to generate better code. The training data consists of pull requests that LLM agents have submitted for merging into a code base.

Additional work includes integration of compilers and performance tools into an agentic workflow as well as feedback loops designed to improve agent performance. Menon notes, “We want to improve the context and information given to the LLM agent so that when it generates code, the result is better-performing code that aligns with HPC needs.”

The open-source package management software Spack provides a useful testbed for the project’s intertwined efforts. Users build and install their HPC application codes with Spack and can create new packages as their code’s dependencies grow. “Spack is a large repository with thousands of files and lines of code that give context to an LLM agent,” says Menon. For example, a Spack agent could generate a package and learn from success or failure during installation.
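The generate-and-learn idea can be pictured as a simple retry loop. This is a minimal sketch under stated assumptions, not the team's agent: `toy_generate` stands in for an LLM call, `toy_install` stands in for a real build step (which might shell out to `spack install`), and every name here is hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class Attempt:
    recipe: str      # package recipe text proposed by the agent
    succeeded: bool  # whether the install step reported success
    log: str         # install output fed back to the agent


def feedback_loop(
    generate: Callable[[List[Attempt]], str],
    try_install: Callable[[str], Tuple[bool, str]],
    max_attempts: int = 3,
) -> List[Attempt]:
    """Propose a recipe, try to install it, and feed the outcome back."""
    history: List[Attempt] = []
    for _ in range(max_attempts):
        recipe = generate(history)       # agent sees all earlier attempts
        ok, log = try_install(recipe)
        history.append(Attempt(recipe, ok, log))
        if ok:
            break                        # stop at the first successful install
    return history


def toy_generate(history: List[Attempt]) -> str:
    """Toy stand-in for an LLM: adds a dependency once the log asks for it."""
    if history and "missing dependency: mpi" in history[-1].log:
        return "depends_on('mpi')"
    return ""


def toy_install(recipe: str) -> Tuple[bool, str]:
    """Toy stand-in for an installer: succeeds only if MPI is declared."""
    if "depends_on('mpi')" in recipe:
        return True, "installed"
    return False, "missing dependency: mpi"


history = feedback_loop(toy_generate, toy_install)
for i, attempt in enumerate(history, start=1):
    print(i, "ok" if attempt.succeeded else "failed", "-", attempt.log)
```

Passing the full history to the generator, rather than only the latest log, is one possible design choice; it lets the agent avoid repeating a recipe that has already failed.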

Another investigation involves low-resource programming languages such as Fortran or Julia. Arjun Guha, who leads Northeastern University’s effort alongside David Bau, explains, “These languages are understudied in the research community, which tends to focus on Python. There’s limited training data, which forces us to be more creative when developing the capabilities of LLM agents to do useful tasks in these languages.”

Meanwhile, UMD associate professor Abhinav Bhatele oversees the optimization of inference engines—how they scale in distributed environments and how they serve AI models to users. In other words, can the engine’s serving mechanism deliver high-throughput results regardless of HPC hardware? The UMD team built a prototype system called YALIS for use in inference engine experiments, with a goal of identifying and mitigating performance bottlenecks.

“Access to multiple supercomputers allows us to test our optimizations on different hardware architectures and enables us to ensure that our codes are performance portable,” says Bhatele, who directs UMD’s Parallel Software and Systems Group. “One challenge will be to demonstrate that the AI models can explain and improve the performance of large, complex scientific codes in a production environment—and not just that of simple toy benchmarks and proxy applications.”

Tom Goldstein, UMD professor and director of the Maryland Center for Machine Learning, is leading the development of open-source LLMs and public training datasets to augment HPC coding. Although commercial coding assistance tools exist, Goldstein notes that using them in specialized HPC settings poses problems. He states, “These systems generally make your code visible to a number of third parties, which introduces challenges for confidential government work, healthcare, banking, and other areas where privacy and data security are paramount.” Building well-trained, open-source alternatives to these tools will enable users to host their own coding pipelines.

People Power

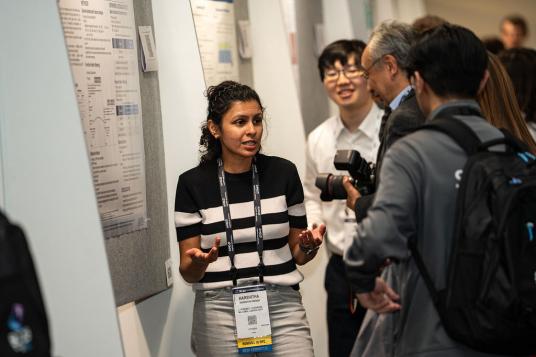

The project team at LLNL grew this year to include Fernbach Fellow Daniel Nichols, who is integrating performance tools into an agentic workflow, while Spack developer Caetano Melone builds an agent that generates Spack packages from an existing code base. Postdoctoral researcher Gautam Singh has also joined the team to develop models that provide actionable feedback for the agentic loop. Iowa State University student Md Mahbubur Rahman helped with agentic interpretability during his Data Science Summer Institute internship, an effort recognized as a Best Poster Award finalist at the International Conference for High Performance Computing, Networking, Storage, and Analysis (SC25) in November. Several of the team’s papers have been submitted for publication and for consideration at additional conferences.

For Guha, the collaboration provides access to unique computing resources unavailable at universities. “Speaking to project members at national labs helps keep us focused on problems that matter,” he adds. Bhatele agrees, “This is a great opportunity for graduate students to tackle real-world issues. For the national labs, the project provides a different perspective on addressing these problems from an academic viewpoint as well as a hiring pipeline to attract young talent to support the national mission.”

Collectively, the team is primed to face the unknowns of the burgeoning HPC-LLM field. “When working with large transformer models, nothing ever works the way you think it will,” Goldstein points out. For example, a supercomputer may not yet be configured to run workloads efficiently, and a model may not work with a specific dataset. When these challenges arise, he continues, “It becomes quite clear why our team has LLM-focused researchers working with HPC-focused teammates. Colleagues help us build solutions that span the whole HPC stack, from high-level applications to low-level routers and switches.”

Additionally, the LLNL team will contribute to the DOE’s new Genesis Mission via the Transformational AI Models Consortium led by Argonne National Laboratory. Menon notes, “The tools developed as part of our project—spanning agentic workflow, performance optimization, and code porting—will be critical for accelerating AI-assisted HPC code development under this initiative.”

— Holly Auten

Machine photos above are courtesy of Argonne National Laboratory, ORNL, and the National Energy Research Scientific Computing Center.