
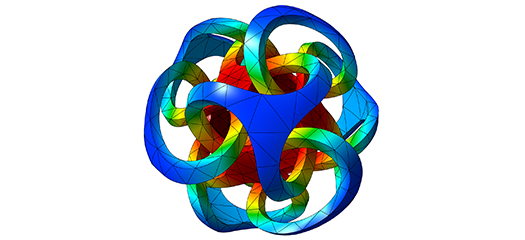

GLVis

GLVis is a lightweight tool for accurate and flexible finite element (FE) visualization, providing interactive views of general FE meshes and solutions.

SUNDIALS

This project provides solvers for initial value problems for ODE systems (including additive Runge-Kutta methods), DAE systems, and nonlinear algebraic systems, along with sensitivity analysis capabilities.

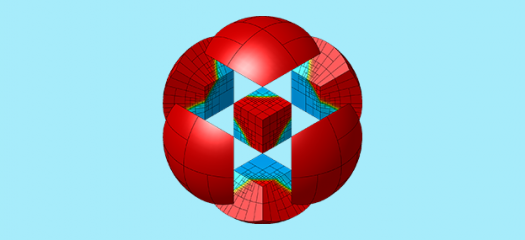

MFEM

The open-source MFEM library enables application scientists to quickly prototype parallel physics application codes based on PDEs discretized with high-order finite elements.

Robert Stephany

As Computing’s eighth Fernbach Fellow, postdoctoral researcher Robert Stephany will develop specialized algorithms under the mentorship of Youngsoo Choi.

Tarik Dzanic

As Computing’s seventh Fernbach Fellow, postdoctoral researcher Tarik Dzanic will develop new algorithms and test them in computational physics simulations under the mentorship of Bob Anderson.

Andrew Gillette

CASC computational mathematician Andrew Gillette has always been drawn to mathematics and says it’s about more than just crunching numbers.

ICML25 acceptances

LLNL researchers have had posters and workshop papers accepted to the 42nd International Conference on Machine Learning (ICML), held July 13–19.

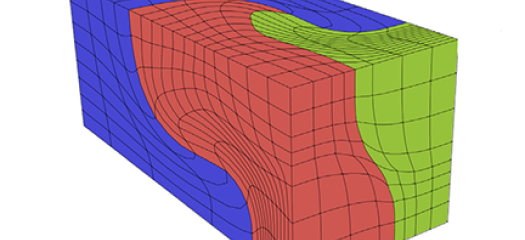

Robust evaluation of PDE solutions on high-order meshes

A new mathematical technique improves the computational efficiency of evaluating PDE solutions on large-scale, high-order meshes on advanced HPC systems.

Mapping cosmic shear to illuminate dark energy

In a recent study published in The Astrophysical Journal, LLNL researchers developed a new approach to mapping cosmic shear that combines linear algebra, statistics, and HPC.