LLNL Computing’s ground-breaking research and development activities, innovative technologies, and world-class staff are often featured in various media outlets.

Source: LLNL Computing

NLIT 2026 event calendar

LLNL staff are heading to the National Laboratories Information Technology Summit (NLIT) on May 4–7.

Access Management AI/ML Cloud Services Collaboration and Productivity Tools Cyber Operations Cyber Security Data Management Data Science Databases/IT Infrastructure Events HPC Systems and Software Information Technology Network Engineering Software Build and Installation

Source: LLNL News

LLNL to harness quantum computing for next-generation magnets

The Quantum Computing for Computational Chemistry (QC3) program seeks to develop and apply quantum algorithms to accelerate simulations of chemistry and materials science to advance commercial energy applications.

AI/ML Data Science Emerging Architectures Information Technology Materials and Manufacturing Quantum Computing

Source: LLNL News

Big Ideas Lab podcast highlights HPC4EI

The Big Ideas Lab dives into the High Performance Computing for Energy Innovation program, a collaboration between national labs and private industry that tackles complex industrial challenges with computational science.

Computational Science Critical Infrastructure HPC Systems and Software Materials and Manufacturing Multimedia

Source: LLNL Computing

ICLR26 acceptances

LLNL researchers have several posters and papers accepted to the 14th International Conference on Learning Representations, held April 23–27.

AI/ML Data Science Deep Learning Events ML Theory Natural Language Processing Scientific ML

Source: LLNL News

LLNL-led study uses machine learning, veterans’ health records to identify ALS drug-repurposing candidate

By combining causal-inference methods with machine learning, researchers evaluated 162 medications to identify drugs prescribed for other conditions that were associated with meaningful differences in survival.

Source: LLNL Computing

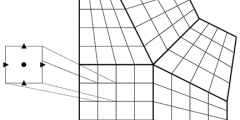

Better solvers, better precision with HYPRE v3

The latest chapter in the nearly 30-year history of hypre includes a new semi-structured algebraic multigrid solver and support for mixed numerical precision at runtime.

Source: The Innovation Platform

Livermore Computing: accelerating excellence in high performance computing

To learn more about the work taking place at Livermore Computing and its potential for a wide range of real-world applications, The Innovation Platform spoke to LLNL’s Deputy for High Performance Computing, Judy Hill.

AI/ML Data Science Emerging Architectures HPC Architectures HPC Systems and Software Hybrid/Heterogeneous Open-Source Software

Source: LLNL News

LLNL, Meta co-develop groundbreaking polymer-chemistry dataset for training AI models

In a pioneering partnership to accelerate materials discovery with AI, researchers from LLNL and Meta have created the world’s largest open dataset of atomistic polymer chemistry.

AI/ML Computational Science Data Management Data Science Materials and Manufacturing

Source: LLNL Computing

Supercomputer allocations boost HPC-LLM project

An LLNL-led team is using 700,000 node-hours of Department of Energy HPC resources to improve developer productivity.

AI/ML Data Science Exascale HPC Architectures HPC Systems and Software Natural Language Processing Performance, Portability, and Productivity Software Engineering

Source: LLNL News

HPC4Mfg program awards funding to three LLNL-industry collaborations

The HPC for Manufacturing program is part of the broader HPC4EI initiative, which LLNL manages for DOE. HPC4Mfg connects U.S. companies with national lab expertise and computing resources to address complex manufacturing challenges using advanced modeling and simulation.

Collaborations Computational Science Materials and Manufacturing Transport

Source: LLNL News

Finding resonance: How LLNL expertise is amplifying collaboration in quantum computing

A DOE investment unites experts from national labs, universities, and industry to advance the next generation of quantum computing, communication, and sensing technologies. LLNL brings deep expertise in materials and microwave cavities to the table.

Source: LLNL Computing

SIAM PP26 event calendar

Our researchers will be well represented at the SIAM Conference on Parallel Processing for Scientific Computing (PP26) on March 3–6. SIAM, the Society for Industrial and Applied Mathematics, has an international community of more than 14,000 individual members.

AI/ML Biology/Biomedicine Co-Design Compiler Technology Computational Math Computational Science Data Science Discrete Mathematics Emerging Architectures Exascale HPC Systems and Software PDE Methods Quantum Computing Seismology Solvers

Source: Science & Technology Review

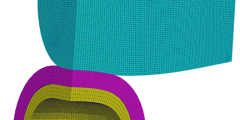

Supercomputing in sync

The National Nuclear Security Administration’s high-performance computing environments rely on a unified, scalable operating system.

Cluster Management HPC Architectures HPC Systems and Software Hybrid/Heterogeneous

Source: LLNL News

Fentanyl or phony? ML algorithm learns to pick out opioid signatures

LLNL scientists initiated and led a cross-disciplinary team that developed a machine learning model to distinguish opioids from other chemicals.

Source: LLNL News

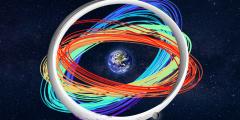

Simulations and supercomputing calculate one million orbits in cislunar space

LLNL researchers have simulated one million orbits in cislunar space and published them in an open-access database with publicly available code.

Source: LLNL News

LLNL makes Glassdoor’s 2026 ‘Best Places to Work’ list

The Lab ranked 12th out of 100 large employers in the United States, marking the seventh recognition the Laboratory has earned in Glassdoor’s award program since its inception in 2009.

Source: LLNL News

LLNL’s Lindstrom honored with IEEE VIS Test of Time Award

Computer scientist Peter Lindstrom received the 2025 IEEE VIS Test of Time Award for his 2014 paper on near-lossless data compression, recognizing its lasting influence on the fields of scientific visualization and HPC.

Awards Data Compression Data Movement and Memory Data Science HPC Systems and Software Scientific Visualization

Source: Data Science Institute

AI in eight pages: Bridging technology to policy through science

LLNL, in collaboration with the California Foundation for Commerce and Livermore Lab Foundation, has released a new report to help California lawmakers navigate the fast-moving AI landscape.

Source: LLNL Computing

Livermore hosts HPSS User Forum for the first time in 33 years

In early November, Lawrence Livermore National Laboratory hosted the High Performance Storage System (HPSS) User Forum, marking the first time in the collaboration’s 33-year history that the global HPSS user community gathered in Livermore.

Collaborations Data Movement and Memory Developer Support Events Storage, File Systems, and I/O

Source: LLNL Computing

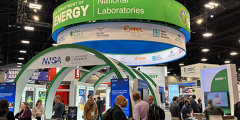

Computing advances highlighted at DOE’s SC25 exhibit booth

During the weeklong conference, attendees visiting the Department of Energy’s booth were treated to two technical demonstrations and a talk by LLNL staff.

AI/ML Algorithms at Scale Biology/Biomedicine Computational Science Containerization Data Science Events Exascale HPC Systems and Software Lasers and Optics Natural Language Processing Resource and Workflow Management Scientific ML

Source: YouTube

Video: How supercomputers are transforming research in cancer, dark matter, and seismology

Researchers are using LLNL’s unclassified supercomputers to delve into complex scientific questions such as uncovering how protein interactions are linked to cancer, shedding light on mysterious dark matter, and understanding the shifting dynamics of seismic waves.

Biology/Biomedicine Collaborations Computational Science HPC Systems and Software Multimedia Seismology Space Science

Source: YouTube

Video: 2025 LLNL highlights

In 2025, LLNL built on our legacy of groundbreaking scientific and technological innovation with the help of partners from throughout the federal government, the nuclear security enterprise, the national laboratory system, industry, and academia.

Source: LLNL Computing

CASC Newsletter | Vol 16 | December 2025

Highlights include innovative solutions for contact mechanics, HPC optimization, quantum dynamics, and carbon capture.

Compiler Technology Computational Math Computational Science Data Science Data-Driven Decisions Discrete Mathematics Earth Systems Emerging Architectures HPC Systems and Software Hybrid/Heterogeneous Materials and Manufacturing Mathematical Optimization Open-Source Software PDE Methods Performance, Portability, and Productivity Programming Languages and Models Quantum Computing Software Engineering Solvers

Source: LLNL News

LLNL caps SC25 with HPC leadership, major science advances and artificial intelligence

LLNL’s presence, which spanned dozens of sessions including tutorials, workshops, paper presentations and birds-of-a-feather meetings, was felt across virtually every major event of the week.

AI/ML Awards Community Computational Math Computational Science Data Science Events Exascale HPC Systems and Software Scientific ML

Source: YouTube

Video: The perfect metaphor for AI security

How do we keep artificial intelligence safe and secure as it advances at breakneck speed? This video explores the risks of AI systems powering today’s chatbots, virtual assistants, and more.